Mindset of a Revenue Optimization Expert – How to Stay Out of Your Own Way

Haley Spindler | Aug 18, 2020

So often I’m asked, “Haley, if I make this change, will I see an increase in conversion rate?” No matter the change, the answer is always simple: “I don’t know.”

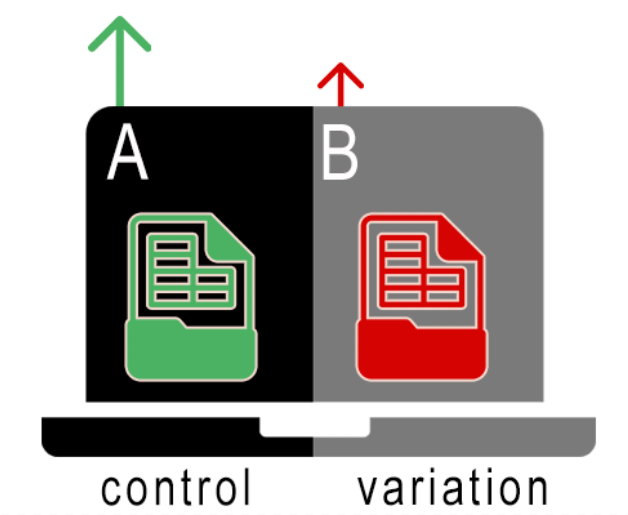

How can I be a leading revenue optimization expert with Build Grow Scale and not know what will and won’t work? It’s easy: revenue optimization is a scientific process that relies on experimentation, specifically A/B split testing. If you’re relying on gut instinct or preference, you’re not doing it right. It’s one of the many responsibilities of an optimization expert to test everything and fight biases.

Of course, the more experience you have doing optimization, the better you become at theorizing what will and won’t work. But you can never be certain, so every idea must be tested. Keep in mind: Numbers don’t lie, and your hypothesis has the potential to be wrong.

I’d like to show you how to think about your store so that you, too, can develop both the intuition and the processes you need to improve it.

In this article, I break down three foundations of thought that all great optimization experts use when they work.

1. Don’t Draw Conclusions from Anything Other Than Data

One of the most important lessons you can take from this article is that none of the decisions an optimization expert makes are hard.

At least once a day, I listen to store owners pondering a decision. They believe it takes “high-level” thinking about a slew of factors to determine if they should execute a specific change. After some back and forth, they typically ask for my opinion. Before they can finish saying, “So what do you think about it?” I blurt out: “I don’t know. Let’s run it as a test!”

The greatest thing about split testing is that you don’t have to guess what works best. You just have to look at the data for the metric you’re trying to improve to see which version had the best effect. BAM!—problem solved—because numbers don’t lie.
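To make “look at the data” a bit more concrete, here is a minimal sketch of one common way to compare the conversion rates of two versions: a two-proportion z-test. This is an illustration under stated assumptions, not necessarily how BGS or any particular testing tool does the math. The function name, the visitor and order counts, and the 0.05 threshold are all hypothetical, and most split-testing platforms will run a calculation like this for you.

```python
from math import erfc, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare conversion rates of control (a) and variation (b).

    Returns (rate_a, rate_b, z, p_value), where p_value is two-sided and
    based on the normal approximation with a pooled conversion rate.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("No variation in outcomes; the test is undefined.")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value: probability of a gap at least this large by chance.
    p_value = erfc(abs(z) / sqrt(2))
    return rate_a, rate_b, z, p_value


if __name__ == "__main__":
    # Hypothetical counts, invented purely for illustration.
    rate_a, rate_b, z, p = two_proportion_z_test(
        conv_a=200, n_a=10_000,  # control: orders, visitors
        conv_b=250, n_b=10_000,  # variation: orders, visitors
    )
    print(f"control: {rate_a:.2%}  variation: {rate_b:.2%}")
    print(f"z = {z:.2f}, p = {p:.4f}")
    # 0.05 is a conventional cutoff, assumed here; pick yours before the test.
    if p < 0.05 and rate_b > rate_a:
        print("Variation wins on this metric.")
    elif p < 0.05:
        print("Variation loses on this metric.")
    else:
        print("No clear winner yet; keep the original or keep testing.")
```

The point of the sketch is that the decision comes from the numbers rather than from anyone’s opinion: the same rule calls a loss a loss, even when the variation was your own idea.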

Of course, in time, your sense about what will and won’t work gets stronger. You’re able to say, “Well, that may not work” or “This could be awesome!” But no optimization expert ever reaches a level where they can say, with certainty, what is or isn’t going to produce a win.

I’ll say it again: Numbers don’t lie. So make your changes based on data.

2. Don’t Test Ideas on a Whim—Get Ideas from Data

Ideally, optimization experts perform data collection for each website they examine. Why can’t they just collect data from one site and use it to form hypotheses for other sites? That shortcut won’t work because each website has a unique audience and each audience behaves in a unique way.

There are no blanket statements in optimization. What works for one site will most likely not be the saving grace for another.

When performing data collection and then analysis, you start to uncover site-specific challenges. For example, one of my BGS clients has a unique product for children. The product transforms from a stuffed animal into a jacket. While performing data analysis, I discovered that a significant number of people didn’t understand how to transform the product from a toy into a jacket and vice versa.

This discovery shaped my test idea, and the resulting test massively increased metrics across the board. If I had just been haphazardly testing whatever I wanted (like fonts or colors), I wouldn’t have implemented the big change that so successfully affected user behavior.

So keep in mind: Each store needs custom data collection, and unique problems require unique solutions.

If you’re interested in the tools we use to perform data collection, I recommend using session-recording software, user-testing software, and Google Analytics as a baseline for all stores. Check out the BGS blog for many more articles on data collection!

3. Kill Your Ego

It’s surprisingly easy to mold data to fit the narrative we want to tell people. So it is imperative that we, as optimization experts, don’t fall into the trap of manipulating data to say what we want it to say to fuel our egos.

So often I have to present data showing that my hypothesis did not solve the problem I intended it to … and it’s not usually fun. But I form a new hypothesis, develop it into a new test iteration, and carry on.

Post-test analysis is a crucial part of being an optimization expert. To be effective, you need to be at peace with the likelihood that you’ll be wrong a lot. If you cannot put your ego aside and admit that a test variation “lost” to the original, you are likely to implement something that has a negative effect on the metric you’re trying to improve.

If your test iteration lost, no harm, no foul. Regroup and test a new iteration until the problem is solved. Admitting you were wrong is far better than the real harm of implementing a change that then fails and costs the company money.

Closing Thoughts

Revenue optimization is a big machine with lots of moving parts. It can seem daunting to learn it all, but the true challenge comes from within. Keep your biases at bay and only draw conclusions from data. Always form test hypotheses based on data collection and analysis—never on a whim. And, probably the most important point, kill your ego or you will cost your client money.

About the author

Haley Spindler

Haley is a Revenue Optimization Expert with Build Grow Scale. Optimizing underperforming pages is her passion and data is how she gets it done. Haley is quite the expert on the subject and has been dedicated to mentoring the future revenue optimizers of Build Grow Scale as the company’s Director of Training. Haley is an avid animal lover and when she’s not in the virtual office, she can be found at the beach with her dog.